In Trust & Safety, most decisions are made in minutes.

But their impact can last much longer.

A single click can limit a creator’s reach.

Apply a sensitive label.

Escalate a case to legal.

Or remove content entirely.

From the outside, it looks simple.

Inside the system, it’s rarely simple.

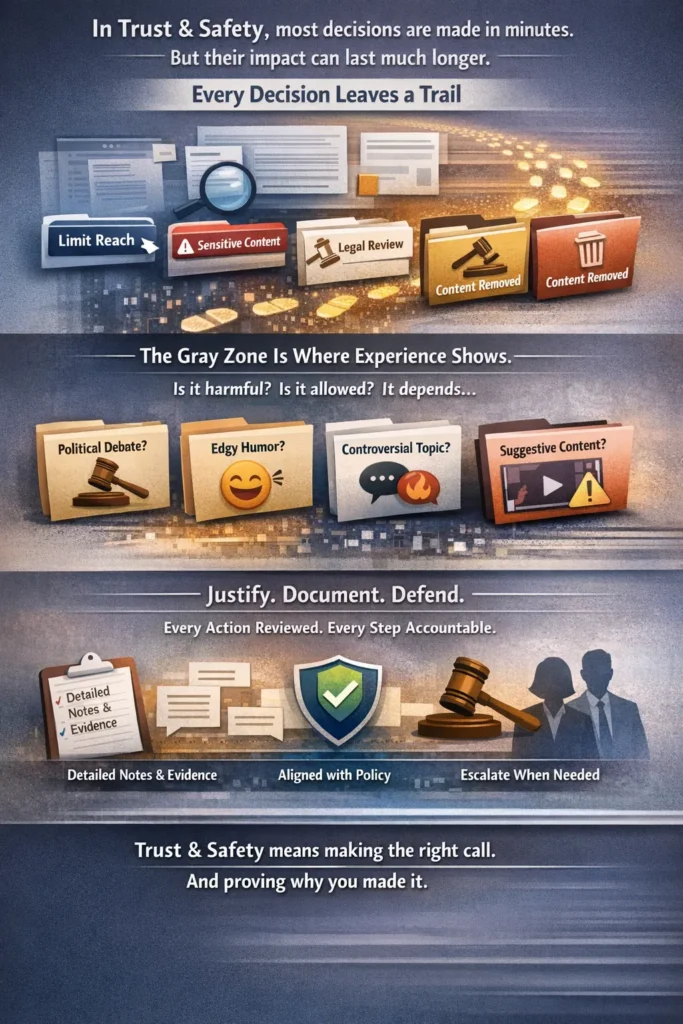

Every Decision Leaves a Trail

One thing you learn quickly in this field is that nothing is casual.

Every action must be justified.

Documented.

Aligned with policy.

Defensible.

You are not just reviewing content. You are creating a record.

If a creator appeals, your notes matter.

If leadership audits the case, your reasoning matters.

If regulators ever ask questions, your documentation matters.

This isn’t just about removing or labeling content. It’s about being accountable for why you did it.

Trust & Safety is not fast-reaction work. It’s structured decision-making under pressure.

The Gray Zone Is Where Experience Shows

Clear violations are easy.

Explicit exploitation. Direct threats. Obvious hate speech.

Those cases don’t usually create debate.

The real challenge lives in the gray zone.

What about:

- A political discussion that borders on advocacy?

- A joke that could be interpreted as harassment?

- A debate about gender that feels inflammatory but avoids direct slurs?

- Third-party content with suggestive scenes but no explicit nudity?

This is where policy interpretation meets judgment.

And this is where experience separates a reviewer from a decision-maker.

You start realizing that policies don’t remove ambiguity. They guide you through it.

Volume vs. Accuracy

There is always pressure.

High queues. Tight SLAs. Performance metrics.

But speed without accuracy creates risk.

If you over-enforce, you damage creator trust.

If you under-enforce, you damage platform safety.

The balance is delicate.

Strong Trust & Safety teams understand that quality does not happen by accident. It requires:

- Clear escalation paths

- Regular calibration sessions

- Ongoing policy training

- Supportive leadership

Without structure, inconsistency creeps in. And inconsistency is one of the biggest threats to platform credibility.

Escalations Are Not Weakness

Early in my career, I saw how some reviewers avoided escalation.

They worried it would make them look unsure.

In reality, escalation protects everyone.

Cases involving political officials, sensitive identity-based discourse, emerging abuse patterns, or legal risk should never rely on a single interpretation.

Escalation is not failure. It’s governance in action.

It ensures decisions are consistent, defensible, and backed by more than one level of review.

Leadership in Trust & Safety

As you grow in this field, your responsibilities shift.

You are no longer just reviewing content.

You’re coaching others on policy interpretation.

Monitoring trends across queues.

Identifying gaps in guidelines.

Supporting team well-being in high-exposure environments.

Leadership here requires emotional stability, fairness, and consistency.

Your team looks to you when cases are ambiguous.

Your tone influences enforcement standards.

Your decisions shape how policies are applied at scale.

It’s operational leadership, but it’s also ethical leadership.

The Responsibility Is Quiet, But Heavy

Trust & Safety doesn’t generate revenue directly.

It rarely receives public recognition.

But it protects the foundation.

Without strong enforcement systems:

- Platforms lose credibility.

- Users lose trust.

- Brands lose confidence.

When moderation works properly, the platform feels balanced. Stable. Safe.

And most users will never know why.

That’s the invisible success of this work.