The first time I reviewed a “fuel shortage” rumour, it didn’t look dangerous.

It was a simple post. A blurry photo of a long queue at a petrol station. A caption claiming, “Fuel will run out by tonight. Fill your tanks now.”

No threats. No obvious violation. Just urgency.

But within a few hours, the situation changed.

In my experience in Trust & Safety, this is how real-world panic often starts online.

It Begins With Something That Looks Real

Rumours don’t spread because they are dramatic. They spread because they feel believable.

In one case I worked on, the image attached to the post was genuine. There really was a queue at that petrol station. But the reason wasn’t a shortage. It was a temporary supply delay in that specific area.

The post removed that context.

What remained was a convincing narrative: fuel is running out.

That’s enough to change how people behave.

Urgency Does the Rest

Once the first post gains traction, variations start appearing.

“Petrol pumps closing soon.”

“Government hiding the truth.”

“Last chance to refill.”

I’ve seen multiple versions of the same rumour appear within minutes, often copied, slightly modified, and reposted across accounts.

This is where the problem accelerates.

People don’t verify. They react.

Queues get longer. Photos of those queues get posted again. Those images then become “proof” of the original rumour.

A feedback loop forms.

Online panic starts creating offline reality.

Moderation Faces a Timing Problem

One of the biggest challenges in handling these situations is timing.

By the time content reaches moderation queues, it’s often already spreading fast.

I remember reviewing a cluster of posts during a similar situation. Individually, none of them contained explicit misinformation. They were mostly reshared images, personal warnings, or speculation.

But collectively, they were amplifying fear.

Moderation systems are typically designed to evaluate content piece by piece.

Rumours spread as patterns.

That gap makes response difficult.
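To make the gap concrete, here is a minimal sketch of what looking at patterns rather than single posts could mean. It is not how any particular platform works. It assumes posts are available as plain text, and the function names, the three-word shingles, the 0.5 similarity threshold, and the minimum cluster size are all illustrative choices of mine.

```python
import re


def shingles(text, k=3):
    """Return the set of k-word sequences in a post, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {" ".join(words)} if words else set()
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Overlap between two shingle sets: 0.0 means disjoint, 1.0 means identical."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_posts(posts, threshold=0.5):
    """Greedy single-pass grouping: a post joins the first cluster whose
    first post (the seed) is similar enough, otherwise it starts a new cluster."""
    clusters = []  # list of (seed_shingles, member_posts)
    for post in posts:
        s = shingles(post)
        for seed, members in clusters:
            if jaccard(seed, s) >= threshold:
                members.append(post)
                break
        else:
            clusters.append((s, [post]))
    return [members for _, members in clusters]


def clusters_needing_review(posts, min_size=3, threshold=0.5):
    """Return only the clusters large enough to suggest a spreading pattern."""
    return [c for c in cluster_posts(posts, threshold) if len(c) >= min_size]


posts = [
    "Fuel will run out by tonight. Fill your tanks now.",
    "BREAKING: Fuel will run out by tonight. Fill your tanks now.",
    "fuel will run out by tonight!! fill your tanks NOW",
    "Lovely weather at the beach today",
]
for cluster in clusters_needing_review(posts):
    print(len(cluster), "similar posts, e.g.:", cluster[0])
```

Each of the three fuel posts would pass an individual review. Grouped together, they become a single item that a reviewer can assess as a pattern. A real system would also need time windows, image matching, and far more robust similarity measures.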

The Role of Amplification

Another factor is how platforms amplify such content.

Posts that create urgency or fear often get higher engagement. More shares. More comments. More visibility.

I’ve seen relatively small accounts suddenly reach thousands of users simply because their post triggered panic.

At that point, the issue is no longer just about the content itself.

It’s about how widely it’s being pushed.
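One rough way to express "how widely it's being pushed" is to compare a post's reach with the size of the account behind it. The sketch below is only an illustration under assumptions: the `PostStats` fields, the sample numbers, and the threshold of 10 are hypothetical, not taken from any real platform or case.

```python
from dataclasses import dataclass


@dataclass
class PostStats:
    post_id: str
    followers: int    # size of the posting account's audience
    impressions: int  # how many users were shown the post


def amplification_ratio(stats):
    """Impressions per follower; values far above 1 mean the post
    travelled well beyond the account's own audience."""
    return stats.impressions / max(stats.followers, 1)


def unusually_amplified(posts, ratio_threshold=10.0):
    """Return the IDs of posts whose reach is out of proportion to the account size."""
    return [p.post_id for p in posts if amplification_ratio(p) >= ratio_threshold]


sample = [
    PostStats("small-account-rumour", followers=200, impressions=8000),
    PostStats("large-account-update", followers=50000, impressions=60000),
]
print(unusually_amplified(sample))
```

A signal like this says nothing about whether a post is true. It only highlights where amplification, rather than the content alone, deserves a closer look.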

Why Simple Removal Isn’t Enough

Even when platforms take action, removing a few posts doesn’t stop the rumour.

New versions appear. Screenshots get reshared. Messages move to private groups or messaging apps.

In some cases, official clarifications come too late. By then, the public has already reacted.

From a Trust & Safety perspective, this is one of the hardest parts.

You’re not just dealing with misinformation.

You’re dealing with human behaviour under uncertainty.

Final Thoughts

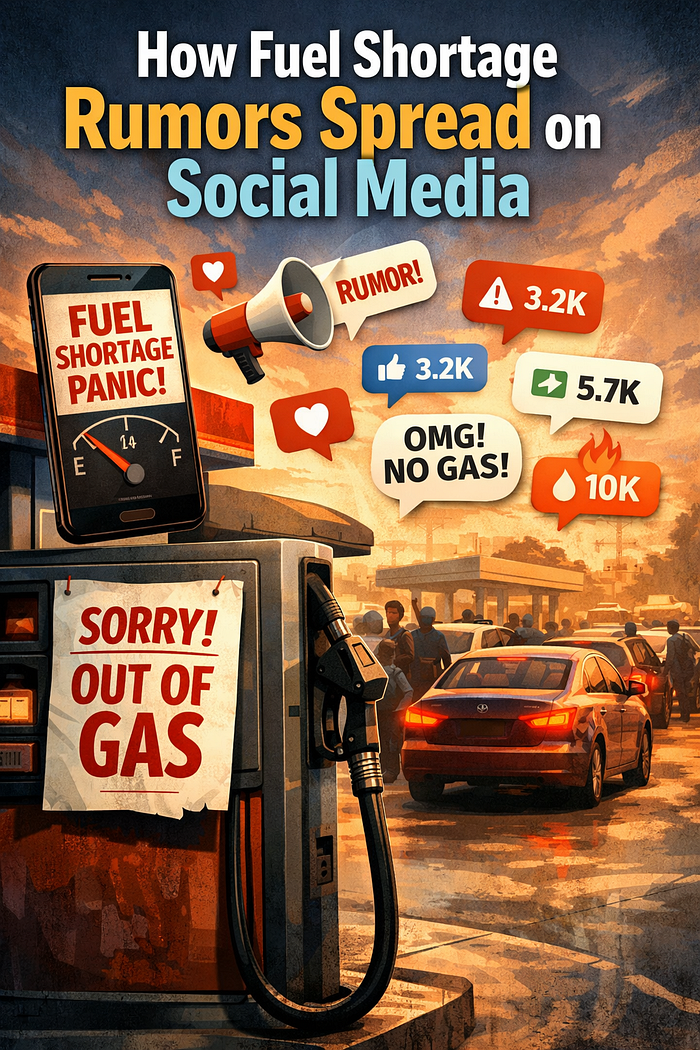

Fuel shortage rumours are a good example of how quickly online content can influence real-world actions.

A single misleading post can trigger panic buying, long queues, and actual supply pressure.

From the outside, it may look like overreaction.

From the inside, it’s a chain reaction driven by speed, amplification, and lack of context.

And by the time moderation catches up, the impact is often already visible offline.

That’s the reality of how rumours spread in today’s digital world.