Working in Trust and Safety changes the way you see social media. Most people experience platforms as users. They scroll, post, comment, and move on. But behind the scenes, there is a constant effort to identify harmful content at scale. That work is increasingly handled by automated moderation models.

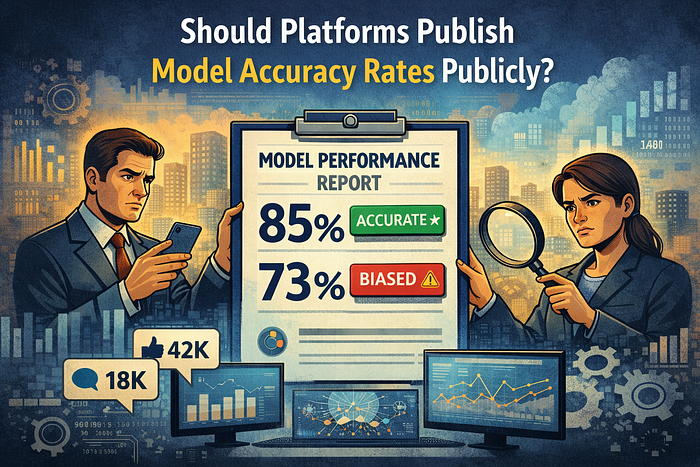

This raises an important question: Should platforms publicly share the accuracy of these models?

From my experience working in Trust and Safety operations, the answer is not as simple as it sounds.

The Reality of AI Moderation at Scale

Every day, millions of posts, videos, and comments move through automated moderation pipelines. AI systems scan content for signals related to harassment, exploitation, violent content, scams, and other policy violations.

Without automation, modern platforms simply could not function. The scale is too large for human reviewers alone.

At that scale, accuracy becomes critical.

Even a small error rate can affect thousands or even millions of users. When models misclassify content, the impact spreads quickly, both through moderation queues and to the people whose content is affected.
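Here is a rough calculation of that claim in Python. The daily volume, benign share and error rate are hypothetical values chosen for illustration. They are not figures from any real platform.

```python
# Hypothetical figures for illustration only; not real platform data.
DAILY_ITEMS = 50_000_000     # assumed items scanned per day
BENIGN_SHARE = 0.99          # assumed share of items that are actually benign
FALSE_POSITIVE_RATE = 0.001  # assumed: 0.1% of benign items wrongly flagged


def expected_false_positives(items: int, benign_share: float, fpr: float) -> int:
    """Expected number of benign items flagged in error.

    The false positive rate is defined over benign items, so it is
    multiplied by the benign volume rather than the total volume.
    """
    benign_items = items * benign_share
    return round(benign_items * fpr)


if __name__ == "__main__":
    per_day = expected_false_positives(DAILY_ITEMS, BENIGN_SHARE, FALSE_POSITIVE_RATE)
    print(f"Wrongly flagged per day: {per_day:,}")
    print(f"Wrongly flagged per 30 days: {per_day * 30:,}")
```

With these assumed values, about 49,500 benign items would be wrongly flagged each day. Over a 30-day month, that is roughly 1.5 million. The false positive rate applies only to benign items, because it measures how often harmless content is misclassified.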

A Real Example From Moderation Operations

I remember a situation where an automated model suddenly began flagging a huge number of harmless posts.

The reason was subtle. The model had learned that certain keywords often appeared in harmful content. Over time, it began treating those keywords as strong signals of a violation, even when the surrounding context was harmless.

The result was a surge of false positives.

Normal conversations, educational posts, and harmless discussions were flagged as violations. Within hours, the moderation queue was flooded with thousands of posts that needed manual review.

Meanwhile, actual harmful content was sitting in the queue longer than usual because human moderators were busy clearing the false alarms.

From the outside, users only saw that their content had been flagged or removed. What they never saw was the uncertainty inside the system making those decisions.
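A deliberately naive sketch can illustrate the failure mode in this example. It is not the model from the incident. It is a hypothetical toy classifier trained on a handful of made-up posts. It weights each word by how often that word appeared in harmful examples, then flags any post that contains a high-weight word, regardless of context.

```python
from collections import Counter

# Hypothetical, made-up training posts: (text, is_harmful).
TRAINING = [
    ("buy cheap pills now", True),
    ("cheap pills shipped overnight", True),
    ("great recipe for dinner", False),
    ("see you at dinner tonight", False),
]


def learn_keyword_weights(examples: list[tuple[str, bool]]) -> dict[str, float]:
    """Weight each word by the share of posts containing it that were harmful.

    Deliberately naive: the model sees isolated words, never context.
    """
    harmful_counts: Counter = Counter()
    total_counts: Counter = Counter()
    for text, is_harmful in examples:
        for word in set(text.lower().split()):
            total_counts[word] += 1
            if is_harmful:
                harmful_counts[word] += 1
    return {word: harmful_counts[word] / total_counts[word] for word in total_counts}


def flag(text: str, weights: dict[str, float], threshold: float = 0.8) -> bool:
    """Flag a post if any single word's weight meets the threshold."""
    words = set(text.lower().split())
    return any(weights.get(word, 0.0) >= threshold for word in words)


if __name__ == "__main__":
    weights = learn_keyword_weights(TRAINING)
    # A harmless educational post is flagged because it contains "pills".
    print(flag("lecture notes on how pills are absorbed", weights))
    print(flag("great dinner tonight", weights))
```

In this toy data, "pills" appears only in harmful training posts. As a result, a harmless educational post that mentions the word is flagged too. Production models are far more sophisticated. Still, a proxy signal standing in for context is one way false positives can surge.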

Why Transparency Could Help

This is why the discussion about publishing model accuracy rates is gaining attention.

Transparency could help build trust between platforms and users. If companies shared detection accuracy, false positive rates, and general performance metrics, it would help people understand the limits of automated moderation.
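These metrics have standard definitions. Each one compares a model's decisions with human labels on an evaluation sample. The sketch below uses hypothetical counts, not real data, to show how the metrics relate to each other.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Model decisions compared with human labels on an evaluation sample."""

    true_positives: int   # harmful items the model flagged
    false_positives: int  # benign items the model flagged
    false_negatives: int  # harmful items the model missed
    true_negatives: int   # benign items the model left alone

    def accuracy(self) -> float:
        # Share of all decisions that matched the human label.
        total = (self.true_positives + self.false_positives
                 + self.false_negatives + self.true_negatives)
        return (self.true_positives + self.true_negatives) / total if total else 0.0

    def precision(self) -> float:
        # Of everything flagged, how much was actually harmful?
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0

    def recall(self) -> float:
        # Of all harmful items, how many did the model catch?
        harmful = self.true_positives + self.false_negatives
        return self.true_positives / harmful if harmful else 0.0

    def false_positive_rate(self) -> float:
        # Of all benign items, how many were wrongly flagged?
        benign = self.false_positives + self.true_negatives
        return self.false_positives / benign if benign else 0.0


if __name__ == "__main__":
    # Hypothetical sample: 10,000 items, 100 of them harmful.
    counts = ConfusionCounts(true_positives=60, false_positives=140,
                             false_negatives=40, true_negatives=9_760)
    print(f"accuracy={counts.accuracy():.3f} precision={counts.precision():.2f} "
          f"recall={counts.recall():.2f} fpr={counts.false_positive_rate():.4f}")
```

With these assumed counts, accuracy is 98.2%. Yet only 30% of flagged items are actually harmful, and 40% of harmful items are missed. Most content is benign, so a single headline accuracy figure can hide the errors that users and researchers care about. That is one reason to report several metrics together.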

Researchers could study how moderation systems behave. Policymakers could make better-informed decisions about platform responsibility. Even users might feel more confident if they understood that moderation decisions come from imperfect systems rather than invisible rules.

In many industries, transparency improves accountability.

Trust and Safety may eventually face the same expectation.

The Risk of Revealing Too Much

But there is another side to this conversation.

Publishing detailed model accuracy data can also help bad actors.

People who spread scams, abuse, or illegal content are constantly testing moderation systems. They experiment with different wording, formats, and posting patterns to find gaps.

If platforms publicly revealed exactly where models struggle, those weaknesses could become a guidebook for bypassing enforcement.

In Trust and Safety work, we see this kind of behavior frequently. Harmful actors adjust quickly. When one of them discovers a small loophole, word of it spreads across networks almost immediately.

Transparency is valuable, but it can also create new risks.

Finding the Balance

The real challenge is not simply deciding whether to publish model accuracy rates. It is deciding how much information to share.

A balanced approach may work best.

Platforms could release high-level transparency reports showing overall detection accuracy, trends in false positives, and improvements in moderation systems. At the same time, they could avoid revealing specific signals or technical details that attackers could exploit.
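Picture a reporting step that keeps detailed, signal-level metrics internal and publishes only aggregates by policy area. In the sketch below, the policy areas, the placeholder signal names and all counts are hypothetical. The point is that the published numbers come from internal data without exposing which signals are weak.

```python
from collections import defaultdict

# Hypothetical internal data: (policy_area, signal) -> (items flagged, items wrongly flagged).
# Signal-level rows stay internal because they reveal where detection is weak.
INTERNAL_METRICS = {
    ("scams", "signal_a"): (1200, 90),
    ("scams", "signal_b"): (800, 10),
    ("harassment", "signal_c"): (500, 75),
    ("harassment", "signal_d"): (1500, 45),
}


def public_report(internal: dict[tuple[str, str], tuple[int, int]]) -> dict[str, float]:
    """Aggregate per-signal counts into one figure per policy area.

    The figure is the percentage of flagged items that were wrongly flagged.
    Signal names never appear in the output; only policy-area totals do.
    """
    flagged: dict[str, int] = defaultdict(int)
    wrong: dict[str, int] = defaultdict(int)
    for (area, _signal), (n_flagged, n_wrong) in internal.items():
        flagged[area] += n_flagged
        wrong[area] += n_wrong
    # Round to one decimal place so published figures stay coarse.
    return {area: round(100 * wrong[area] / flagged[area], 1) for area in flagged}


if __name__ == "__main__":
    print(public_report(INTERNAL_METRICS))
```

With these assumed counts, the report would show that 5.0% of scam flags and 6.0% of harassment flags were wrong. It would not reveal that `signal_a` and `signal_c` account for most of those errors.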

This would allow platforms to demonstrate accountability while still protecting the integrity of their safety systems.

The Future of Trust in Moderation

Working in Trust and Safety makes one thing very clear. AI moderation is powerful, but it is far from perfect. Most failures are not dramatic. They happen quietly, often at massive scale.

As platforms rely more on automation, the pressure for transparency will continue to grow.

If companies expect users to trust automated enforcement decisions, they will eventually need to explain how those systems perform.

The difficult part will always be the same: finding the line between openness and security.

And in Trust and Safety, that line is rarely easy to draw.