(From Someone Who Works in Trust & Safety)

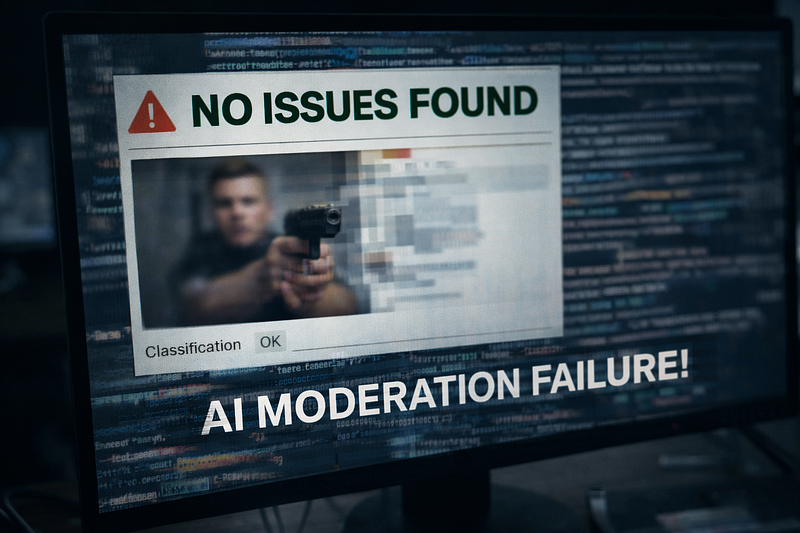

AI moderation is often described as scalable, efficient, and objective.

And to be fair, on scale and efficiency, it is.

Without automation, modern platforms simply couldn’t function. The volume is too high. The pace is too fast.

But after working in Trust & Safety operations, I’ve learned something important:

AI doesn’t usually fail in dramatic ways.

It fails quietly.

Systematically.

At scale.

And those quiet failures are the ones that matter most.

1. It Fails at Context

AI models are good at detecting patterns.

They are not good at understanding intent.

In real review queues, context is everything. A sentence alone rarely tells the full story. I’ve seen cases where:

- A post looked like hate speech but was quoting someone critically

- A phrase flagged as abusive was actually reclaimed language

- A harmless video clip was part of a larger harassment trend

AI sees probabilities. It matches signals.

Moderation requires interpretation.

And interpretation requires human judgment.

2. It Fails in Edge Cases

Automation works best on obvious violations.

Clear spam. Explicit imagery. Repetitive hate phrases.

But the real risk rarely sits in obvious content. It lives in the gray zone.

Subtle incitement.

Dog whistles.

Manipulated media.

Coordinated but indirect abuse.

These cases don’t always trigger strong signals. They require reading behavior, history, and intent together, not scoring a single post in isolation.

Even a small error rate in these categories becomes significant at scale.

That’s where the cracks begin to show.
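To make the point about small error rates concrete, here is a rough back-of-the-envelope sketch. The daily volume, the share of gray-zone content, and the error rate are all made-up numbers chosen only to show the arithmetic; they are not figures from any real platform.

```python
def expected_errors(items_per_day: int, gray_zone_share: float, error_rate: float) -> float:
    """Expected number of wrong decisions per day on gray-zone content."""
    return items_per_day * gray_zone_share * error_rate


# Hypothetical inputs, for illustration only.
daily_items = 10_000_000   # items processed per day (assumed)
gray_share = 0.02          # fraction that falls in the gray zone (assumed)
error_rate = 0.05          # model error rate on that fraction (assumed)

per_day = expected_errors(daily_items, gray_share, error_rate)
print(f"{per_day:,.0f} wrong gray-zone decisions per day")
print(f"{per_day * 365:,.0f} per year")
```

Under these assumptions, a 5 percent error rate on just 2 percent of content still means roughly ten thousand wrong calls every day, each one landing on a real person.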

3. It Fails Through Bias Amplification

This is one of the hardest realities to talk about.

AI systems are trained on historical data. If past enforcement patterns were uneven, the model can absorb and reproduce those patterns.

Certain dialects. Certain communities. Certain topics.

Not because they are inherently more harmful. But because the data associates them with higher risk.

The danger is that automation makes the outcome look neutral.

When a machine flags something, it feels objective. But models inherit the biases of the systems that trained them.

Without constant auditing, bias doesn’t disappear. It scales.
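One simple form that auditing can take is comparing how often the model flags content that human reviewers judged non-violating, broken down by group. The sketch below is only an illustration: the group labels, the record layout, and the sample data are hypothetical, and a real audit would need far larger samples and careful labeling.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Per-group share of non-violating items that the model flagged anyway.

    Each record is (group, model_flagged, is_violation), where is_violation
    is the human-reviewed ground truth.
    """
    benign = defaultdict(int)
    wrongly_flagged = defaultdict(int)
    for group, model_flagged, is_violation in records:
        if not is_violation:
            benign[group] += 1
            if model_flagged:
                wrongly_flagged[group] += 1
    return {group: wrongly_flagged[group] / benign[group] for group in benign}


# Made-up audit sample, for illustration only.
sample = [
    ("dialect_a", True, False),
    ("dialect_a", False, False),
    ("dialect_a", True, True),
    ("dialect_a", True, False),
    ("dialect_a", False, False),
    ("dialect_b", False, False),
    ("dialect_b", False, False),
    ("dialect_b", True, False),
    ("dialect_b", False, False),
    ("dialect_b", True, True),
]

rates = false_positive_rates(sample)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} of benign posts flagged")
```

If one group’s benign posts are flagged at twice the rate of another’s, that gap is worth investigating before the model’s output is treated as neutral.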

4. It Fails When Humans Over-Trust It

In high-volume environments, confidence scores matter.

When a system shows 95 percent confidence, it’s easy for reviewers to lean on that signal. Over time, this creates automation bias.

Instead of asking, “Does this align with policy?” the question becomes, “The model flagged it, so it must be right.”

That shift is subtle. But it changes decision quality.

AI should support human reasoning. It should not quietly stand in for it.

5. It Fails at Transparency

From the outside, users often receive automated enforcement messages with little explanation.

No detailed reasoning.

No insight into thresholds.

Limited clarity around appeal outcomes.

When enforcement lacks transparency, it feels arbitrary.

Trust & Safety is not just about removing harmful content. It’s about maintaining legitimacy.

If users don’t understand why something happened, trust erodes.

6. It Fails at Emotional Protection

AI can reduce volume.

It cannot absorb emotional weight.

In practice, automation often filters obvious violations and leaves complex, ambiguous, or highly disturbing material for human review.

I’ve seen this pattern repeatedly.

The system becomes more efficient, but the human exposure becomes more concentrated.

Efficiency is not the same as protection.
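The concentration effect is easy to see with simple arithmetic. This sketch assumes, purely for illustration, that automation resolves only the easy items and that every hard item still reaches a human; the counts are invented.

```python
def hard_share(total_items: int, hard_items: int, auto_resolved_easy: int) -> float:
    """Share of the human review queue made up of hard items.

    Assumes automation removes only easy items, so all hard items
    still go to human reviewers.
    """
    if auto_resolved_easy > total_items - hard_items:
        raise ValueError("cannot auto-resolve more easy items than exist")
    human_queue = total_items - auto_resolved_easy
    return hard_items / human_queue


# Hypothetical queue: 1,000 items, 100 of them complex or disturbing.
before = hard_share(total_items=1000, hard_items=100, auto_resolved_easy=0)
after = hard_share(total_items=1000, hard_items=100, auto_resolved_easy=800)

print(f"Before automation: {before:.0%} of the human queue is hard content")
print(f"After automation:  {after:.0%} of the human queue is hard content")
```

In this example the human queue shrinks from 1,000 items to 200, but the share of difficult material a reviewer sees rises from 10 percent to 50 percent.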

7. The Real Failure Is Governance

The biggest weaknesses I’ve seen are not technical. They’re structural.

Who audits the models?

Who monitors bias drift over time?

Who defines enforcement thresholds?

Who ensures meaningful human oversight remains in place?

AI does exactly what it is designed to do.

The real question is whether the organization around it is mature enough to manage its power responsibly.

That’s a governance issue, not a coding issue.

Final Thoughts

AI moderation is necessary.

The scale of digital platforms demands automation.

But scale without accountability creates risk.

The future of moderation is not human versus machine.

It’s structured collaboration. Clear policy frameworks. Transparent enforcement logic. Strong auditing. Ethical oversight.

AI doesn’t fail simply because it’s flawed.

It fails when we expect it to replace human judgment instead of strengthening it.

And from where I sit in Trust & Safety, that distinction makes all the difference.